Agentic AI Platform Selection

5-Dimension Evaluation Framework + TCO Model + Real Failure Cases

A leading hotel group spent 8 months evaluating four Agentic AI platforms. Their final choice enabled them to deploy a digital concierge across 19 countries and 12,000+ hotels in just 3 months. Meanwhile, a competitor in the same industry spent only 2 weeks on selection, chose an open-source solution with an impressive demo—and shut the project down 6 months later when production security, concurrency, and operational costs spiraled out of control.

An Agentic AI Platform is the core infrastructure for building, deploying, and managing autonomous AI Agents. Through intelligent reasoning, task planning, multi-agent collaboration, and end-to-end workflow execution, it enables AI to handle complex business processes with minimal human intervention. According to Omdia, the Asia-Pacific Agentic AI software market will surge from $271 million in 2025 to $9.7 billion by 2030—a staggering 105% CAGR.

But rapid market growth also means an overwhelming number of vendors, similar-sounding pitches, and real capability gaps hidden behind polished demos. This guide draws on data from both the Omdia Market Radar 2026 and IDC MarketScape 2025 reports, combined with real enterprise selection experiences, to present a 5-dimension evaluation framework for Agentic AI platform selection—helping CTOs and AI leaders make the right decision.

Executive Summary

Key Takeaways:

- Selection failures stem not from "picking the wrong features" but from "evaluating on the wrong dimensions"—demo performance ≠ production readiness

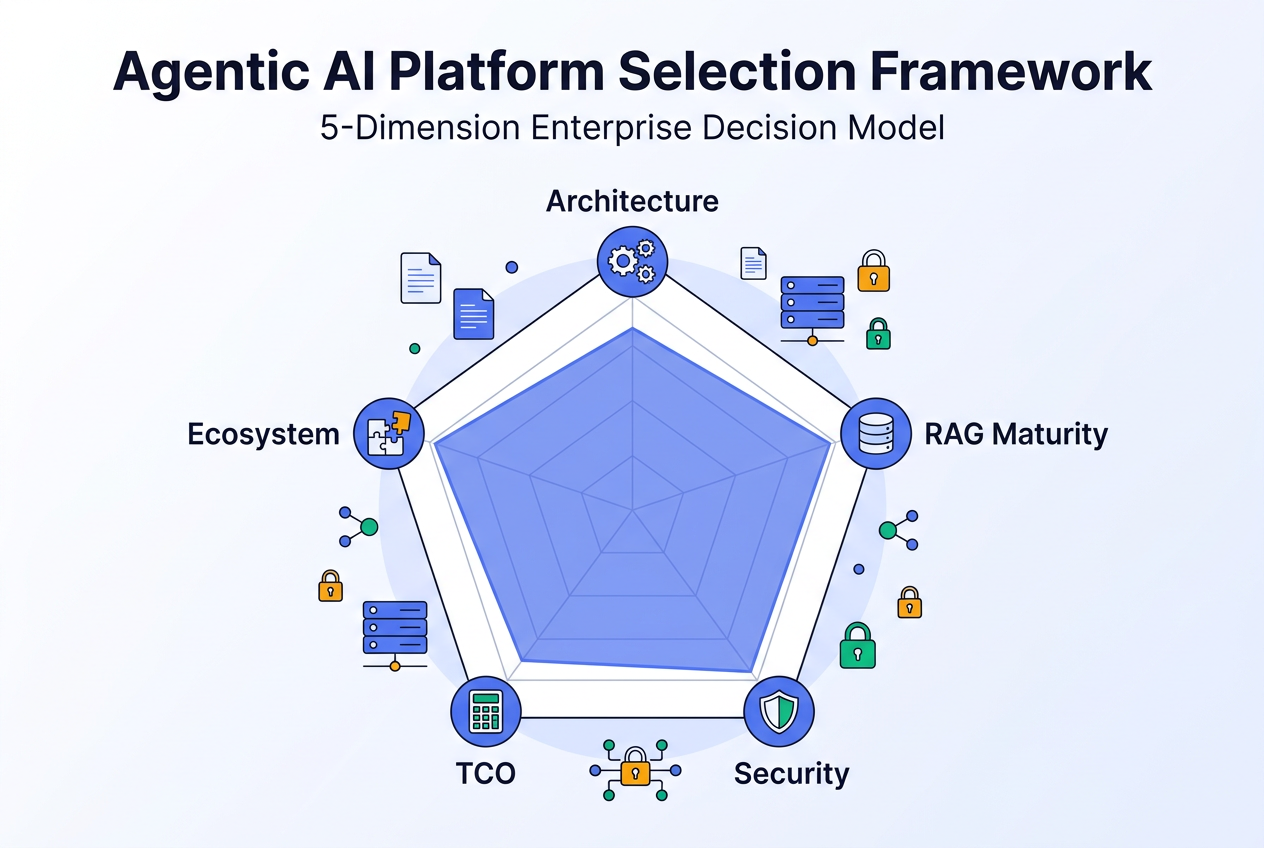

- The 5-dimension framework covers Architecture Flexibility, RAG Maturity, Security & Compliance, TCO (Total Cost of Ownership), and Ecosystem Integration

- Model inference accounts for only 30-40% of real Agentic AI costs; infrastructure, integration, operations, and compliance make up the remaining 60-70%

- Cross-validation from Omdia and IDC confirms: full-stack capabilities + regional compliance are the critical weights for Asia-Pacific selection

- A downloadable scoring template is included for direct use in internal procurement decisions

Why 80% of Enterprise Agentic AI Selections Require Rework

Agentic AI platform selection is one of the highest-leverage decisions in enterprise AI strategy. Choose right, and your AI Agent projects can go live within weeks and deliver business value. Choose wrong, and your team may not discover the problems until 6-12 months later—by which time significant technical debt and sunk costs have accumulated.

According to Omdia Market Radar 2026 research, enterprises in the Asia-Pacific region face three systemic pitfalls in Agentic AI platform selection:

Pitfall 1: Demo-Driven Selection

Most selection processes start with "let the vendor demo." But demo environments and production environments are fundamentally different:

| Dimension | Demo Environment | Production Environment |

|---|---|---|

| Data Volume | 10-50 curated documents | 10,000+ mixed-format documents |

| Concurrency | Single-user testing | Hundreds to thousands of concurrent users |

| Document Formats | Clean PDF/Word files | Scanned documents, nested tables, multilingual content |

| Security | None | Data isolation, audit logs, compliance certifications |

| Operations | "If it runs, it's fine" | SLA, canary deployments, automatic failover |

A leading automotive manufacturer learned this the hard way: their initial chatbot demo achieved 90%+ accuracy in controlled testing, but in real customer environments—where product manuals were far more complex than expected, knowledge base cold-start delays were severe, and rule-based fallbacks couldn't understand nuanced intents—customer satisfaction dropped sharply and the project nearly stalled.

Pitfall 2: Feature Checklist Comparison

"Platform A has 50 features, Platform B has 80"—this comparison seems objective but misses two critical issues:

- Production maturity of features: Two platforms may both claim "RAG support," but one only offers basic vector search while the other provides GraphRAG + Agentic RAG + hybrid retrieval

- Synergy between features: A simple stack of independent features doesn't equal integrated capability. Workflow orchestration, multi-agent collaboration, and knowledge management need deep architectural integration

Pitfall 3: Ignoring TCO (Total Cost of Ownership)

Nearly every vendor quote covers only "platform subscription fees." But the real TCO structure of Agentic AI is far more complex:

| Cost Category | Typical Share | Why It's Overlooked |

|---|---|---|

| Model Inference | 30-40% | This is what vendors proactively share |

| Infrastructure | 15-20% | Vector databases, storage, networking |

| Integration Development | 15-25% | Custom development to connect existing systems |

| Operations & Personnel | 10-15% | Knowledge base maintenance, model tuning, troubleshooting |

| Security & Compliance | 5-10% | Certifications, auditing, data governance |

One dairy company's AI copywriting agent consumed 30 million tokens daily. If the selection phase only compared "price per thousand tokens" without considering prompt optimization, caching mechanisms, and cost attribution tools, the monthly bill could exceed budget by 200%.
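
To make the gap between "price per thousand tokens" and the actual monthly bill concrete, here is a rough sketch of the arithmetic. The 30 million tokens per day comes from the case above; the unit price, cache hit ratio, and cached-token discount are hypothetical placeholders, not vendor figures.

```python
def monthly_inference_cost(tokens_per_day, price_per_1k_tokens,
                           cache_hit_ratio=0.0, cached_price_factor=1.0,
                           days=30):
    """Estimate monthly inference spend.

    cache_hit_ratio: fraction of tokens served from a prompt cache (0..1).
    cached_price_factor: price of a cached token relative to an uncached one.
    """
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    monthly_tokens = tokens_per_day * days
    uncached = monthly_tokens * (1 - cache_hit_ratio)
    cached = monthly_tokens * cache_hit_ratio
    billable = uncached + cached * cached_price_factor
    return billable * price_per_1k_tokens / 1000


# Hypothetical unit price of 0.002 per thousand tokens.
baseline = monthly_inference_cost(30_000_000, price_per_1k_tokens=0.002)

# Same workload, assuming half the tokens hit a cache billed at 25% of list price.
with_cache = monthly_inference_cost(30_000_000, 0.002,
                                    cache_hit_ratio=0.5,
                                    cached_price_factor=0.25)

print(f"baseline: {baseline:.2f}, with caching: {with_cache:.2f}")
```

Under these assumed numbers, two platforms with an identical list price differ by more than a third on the monthly bill, which is why caching and cost attribution belong in the comparison.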

The 5-Dimension Evaluation Framework

This 5-dimension platform selection framework integrates Omdia Market Radar's 7 capability dimensions and IDC MarketScape's dual-axis model, focused on the areas where enterprises most commonly stumble during selection.

Dimension 1: Architecture Flexibility

Architecture flexibility assesses whether a platform can adapt to evolving AI Agent needs—from simple single-agent Q&A to complex multi-agent business orchestration.

Key Evaluation Criteria:

| Criteria | Baseline (Pass) | Advanced (Bonus) |

|---|---|---|

| Workflow Orchestration | Drag-and-drop visual builder | Visual + code node hybrid orchestration |

| Multi-Agent Framework | Agent-to-agent message passing | Planning-execution separation, free handoff, and workflow routing |

| Model Support | Compatible with mainstream LLMs | Mixed proprietary + third-party models with dynamic routing |

| Deployment Options | SaaS deployment | Private + hybrid cloud + edge deployment |

Why This Dimension Matters Most

One of the key reasons Tencent Cloud ADP received high marks in the Omdia report was its pioneering two-step multi-agent workflow framework: a Planning Agent performs strategic task decomposition, then delegates sub-tasks to specialized execution Agents. This "plan-then-execute" architecture dramatically outperforms fixed-flow orchestration in complex enterprise scenarios like cross-department approval workflows and multi-turn investment research analysis.

Selection Tip: Some platforms claim "multi-agent" support, but actually offer only multiple independent prompt templates without shared state or collaboration capabilities. Verification: Ask the vendor to demonstrate two agents collaborating on a cross-domain task within a single conversation context.
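
The difference between "multiple prompt templates" and real collaboration is easier to see in code. The following is a minimal sketch of a plan-then-execute loop with shared state. It is not the ADP implementation; the agent names, the `SharedContext` class, and the fixed planning rule are all hypothetical stand-ins for what would be LLM calls in a real platform.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SharedContext:
    """State visible to every agent in the same conversation."""
    request: str
    results: Dict[str, str] = field(default_factory=dict)


def policy_agent(ctx: SharedContext) -> str:
    # Hypothetical execution agent: works only from the original request.
    return f"policy check for: {ctx.request}"


def pricing_agent(ctx: SharedContext) -> str:
    # Reads the policy agent's output from shared state before answering.
    prior = ctx.results.get("policy", "no policy result")
    return f"quote prepared after [{prior}]"


EXECUTORS: Dict[str, Callable[[SharedContext], str]] = {
    "policy": policy_agent,
    "pricing": pricing_agent,
}


def plan(request: str) -> List[str]:
    """Planning step: decompose the request into ordered sub-tasks.

    A real planner would call an LLM; this stub returns a fixed order.
    """
    return ["policy", "pricing"]


def run(request: str) -> SharedContext:
    ctx = SharedContext(request=request)
    for task in plan(request):
        # Each executor sees everything earlier executors wrote.
        ctx.results[task] = EXECUTORS[task](ctx)
    return ctx


ctx = run("refund for a cancelled booking")
print(ctx.results["pricing"])
```

During a vendor demo, the equivalent check is whether the second agent's answer visibly depends on the first agent's output, as `pricing_agent` does here.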

Dimension 2: RAG Maturity

RAG (Retrieval-Augmented Generation) is the "knowledge engine" of any Agentic AI platform. RAG maturity determines whether AI Agents can accurately leverage enterprise-specific knowledge.

Key Evaluation Criteria:

| Criteria | Baseline | Production-Grade |

|---|---|---|

| Document Parsing | PDF/Word/TXT support | 28+ formats, 200MB per file, table structure preservation, OCR |

| Chunking Strategy | Fixed-length chunking | Semantic + recursive + parent-child multi-strategy options |

| Retrieval Method | Pure vector search | Vector + keyword hybrid retrieval + reranking |

| Advanced RAG | None | GraphRAG + Agentic RAG |
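
As an illustration of the "hybrid retrieval" row, the sketch below merges a vector ranking and a keyword ranking with reciprocal rank fusion (RRF), one common fusion technique. Whether a given platform uses RRF or another method is something to ask the vendor; the document IDs are made up, and `k = 60` is simply a commonly used default.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) for every list it appears in,
    so items ranked well by several retrievers rise to the top.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties break on doc_id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical vector search output
keyword_hits = ["doc_b", "doc_d", "doc_a"]  # hypothetical keyword search output

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

A reranking model would then typically reorder the top of this fused list, which corresponds to the "+ reranking" part of the production-grade column.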

Real Case: How RAG Maturity Impacts Business Outcomes

A major hotel group using basic RAG (keyword retrieval) achieved ~60% knowledge base accuracy with 1,000+ FAQ entries to maintain. After switching to Tencent Cloud ADP's advanced RAG, accuracy improved to 95%+, FAQ maintenance dropped to 100+ entries, and front-desk staff saved 0.5-1 hour daily.

Selection Tip: Document parsing is the most underestimated component of RAG maturity. A PDF financial report with nested tables and complex layouts—if table structures are lost during parsing—will yield incomplete retrieval results regardless of how good your vector model is. Use your own real enterprise documents for PoC testing, not vendor-prepared samples.

Dimension 3: Security & Compliance

For enterprises operating in the Asia-Pacific region, security and compliance isn't a "nice-to-have"—it's a "deal-breaker."

Key Evaluation Criteria:

| Criteria | Assessment Focus | Verification Method |

|---|---|---|

| International Certifications | SOC 2, ISO 27001 | Request current certificates |

| Industry Certifications | Finance, healthcare, government sector-specific | Confirm target industry coverage |

| Data Isolation | Tenant-level data and network isolation | Review architecture documentation |

| Compliance Coverage | Country/region data protection regulations | Confirm target market support |

| Audit Capabilities | Conversation logs, decision traceability | Request audit log demonstration |

| Content Safety | Prompt injection defense, output filtering | Conduct adversarial testing |

Regional Compliance Complexity

Compliance environments across Asia-Pacific markets are notably fragmented:

| Market | Key Compliance Requirements | Impact on Platform Selection |

|---|---|---|

| Singapore | PDPA + cross-border data transfer rules | Must clarify data flow and storage locations |

| Indonesia | Sector-specific mandatory data localization | Must support on-premises deployment |

| Thailand | PDPA + financial industry addendums | Financial institutions require private deployment |

| China Mainland | Cybersecurity Law + Data Security Law + PIPL | Requires China-compliant solutions |

The Omdia report highlights that Tencent Cloud ADP holds CAICT Level 5 certification (the highest level) for AI Agent assessment, along with SOC 2 and ISO 27001 international certifications. The IDC report specifically notes Tencent Cloud's localized compliance capabilities across Singapore, Malaysia, Indonesia, Thailand, and Hong Kong.

Dimension 4: TCO (Total Cost of Ownership)

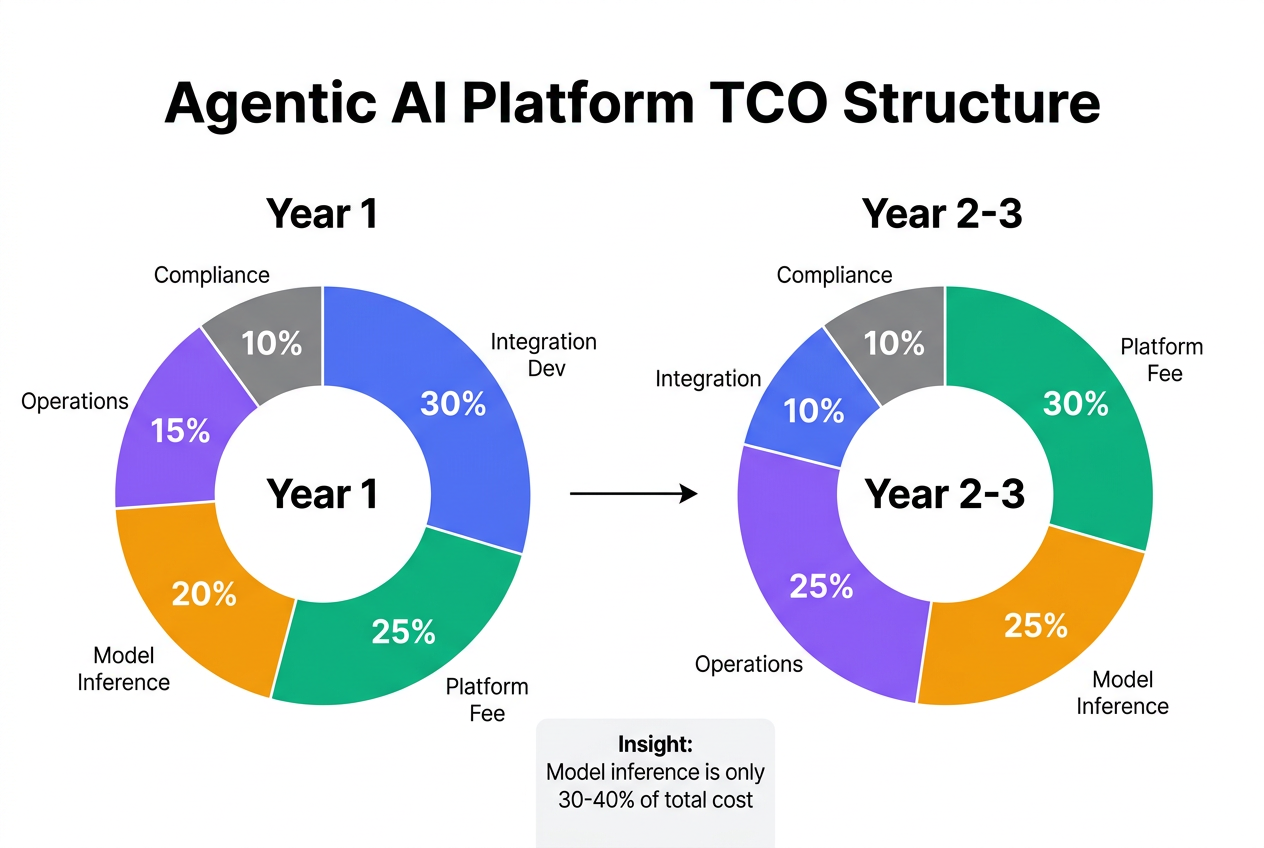

TCO analysis is the most undervalued dimension in Agentic AI platform selection. Most evaluations only examine "platform subscription fees," while the actual 3-year TCO can be 3-5x the quoted price.

TCO Calculation Model:

| Cost Item | Year 1 Share | Year 2-3 Share | Key Cost-Reduction Capability |

|---|---|---|---|

| Platform Subscription | 25% | 30% | Flexible billing (pay-as-you-go vs. annual) |

| Model Inference | 20% | 25% | Prompt caching, model routing optimization |

| Integration Development | 30% | 10% | Pre-built templates, low-code orchestration |

| Operations & Personnel | 15% | 25% | Automated ops, observability tools |

| Security & Compliance | 10% | 10% | Built-in compliance (no additional procurement) |

Why Year 1 and Year 2-3 Cost Structures Differ

Year 1 integration development costs are highest (30%) as enterprises connect existing systems, build knowledge bases, and configure business workflows. From Year 2 onward, integration costs decrease but operations and personnel costs rise—more Agents to manage, knowledge bases requiring continuous updates, and models needing periodic tuning.
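
The share table above can be turned into a rough estimate: if the vendor quote covers only the subscription, dividing it by the subscription share gives an implied total. The sketch below assumes a flat, hypothetical annual quote of 100,000 and treats the table's shares as exact, which they will not be in practice.

```python
YEAR1_SHARES = {
    "subscription": 0.25, "inference": 0.20, "integration": 0.30,
    "operations": 0.15, "security": 0.10,
}
YEAR2_3_SHARES = {
    "subscription": 0.30, "inference": 0.25, "integration": 0.10,
    "operations": 0.25, "security": 0.10,
}


def breakdown_from_quote(subscription_quote, shares):
    """Scale a subscription quote up to a full annual cost breakdown."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 100%")
    total = subscription_quote / shares["subscription"]
    return {item: total * share for item, share in shares.items()}


quote = 100_000  # hypothetical annual subscription quote

year1 = breakdown_from_quote(quote, YEAR1_SHARES)
later = breakdown_from_quote(quote, YEAR2_3_SHARES)

# Year 1 plus two later years, compared with three years of subscription alone.
three_year_total = sum(year1.values()) + 2 * sum(later.values())
multiple = three_year_total / (3 * quote)

print(f"3-year TCO: {three_year_total:,.0f} ({multiple:.2f}x the quoted subscription)")
```

With these inputs the 3-year total lands at roughly 3.6 times the quoted subscription, inside the 3-5x range mentioned earlier.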

Key TCO Optimization Levers:

- Low-code orchestration reduces integration costs: Tencent Cloud ADP provides 70+ application templates and 140+ plugins, cutting implementation timelines from the industry average of 6-12 months to under 3 months—cited by Omdia as a core differentiator

- Observability tools reduce operational costs: Built-in resource dashboard covering 7 dimensions including model usage, concurrency, and knowledge base capacity enables per-Agent cost attribution

- Model flexibility reduces inference costs: Support for YouTu models alongside mainstream models such as GPT and Gemini allows enterprises to select model tiers by scenario complexity

Dimension 5: Ecosystem Integration

An Agentic AI platform doesn't operate in isolation—it needs deep integration with existing enterprise IT systems to deliver real business value.

Key Evaluation Criteria:

| Criteria | Assessment Focus |

|---|---|

| Plugin Ecosystem | Number and coverage of pre-built plugins/connectors |

| API Openness | REST API, Webhook, SDK completeness |

| Protocol Support | MCP (Model Context Protocol), A2A (Agent-to-Agent) |

| Enterprise System Integration | CRM, ERP, ticketing, messaging platform connectors |

| Developer Ecosystem | Documentation quality, community activity, open-source projects |

Tencent Cloud ADP offers 140+ plugins covering customer service, marketing, knowledge management, and data analytics. Open-source contributions including Youtu-Agent, Youtu-GraphRAG, and ADP-Chat-Client further strengthen the ecosystem.

Platform Comparison Reference

The following comparison is provided for selection reference, to help enterprises match their needs with the right Agentic AI platform.

Three Platform Approach Types

| Dimension | Open-Source Self-Build | Cloud-Native Platforms | Enterprise-Grade Platforms |

|---|---|---|---|

| Examples | Dify, n8n, LangChain | Amazon Bedrock Agents, Google Vertex AI | Tencent Cloud ADP |

| Architecture | High flexibility (full code control), but requires self-built infrastructure | Medium (API-based extension), locked into cloud ecosystem | Medium-high (visual + code hybrid), fully managed |

| RAG Maturity | Must assemble all components yourself | Basic RAG capabilities | Out-of-box GraphRAG + Agentic RAG |

| Security | Entirely enterprise-owned responsibility | Inherits cloud provider base certifications | Built-in CAICT Level 5 + SOC 2 + ISO 27001 |

| TCO (3-Year) | Low platform cost, high operational overhead | Pay-as-you-go, costs scale linearly | Annual plans + cost attribution tools, high predictability |

| Ecosystem | Flexible but heavy customization needed | Deep binding to cloud provider services | 140+ plugins + 70+ templates + open APIs |

When Each Approach Doesn't Fit

| Approach | Unsuitable Scenarios | Reason |

|---|---|---|

| Open-source | Enterprises without a dedicated AI infrastructure team | High operational complexity; knowledge base management, model tuning, and security hardening are all self-managed |

| Open-source | Finance and government sectors with strict compliance requirements | Building compliance from scratch is prohibitively expensive and risky |

| Cloud-native | Enterprises needing multi-cloud or vendor lock-in avoidance | Deep single-cloud ecosystem binding makes migration costly |

| Cloud-native | Enterprises requiring deep Chinese language NLP support | Some global cloud providers offer limited Chinese localization |

| Enterprise platform | Highly technical teams requiring extreme low-level customization | Platform abstraction provides convenience but limits some low-level flexibility |

Platform Scoring Template

Use this scoring template based on the 5-dimension framework. Adjust weights based on your industry and business stage:

| Dimension | Criteria | Weight | Vendor A | Vendor B | Vendor C |

|---|---|---|---|---|---|

| Architecture | Workflow orchestration | 10% | _ /10 | _ /10 | _ /10 |

| | Multi-agent framework maturity | 10% | _ /10 | _ /10 | _ /10 |

| | Model support breadth | 5% | _ /10 | _ /10 | _ /10 |

| | Deployment flexibility | 5% | _ /10 | _ /10 | _ /10 |

| RAG Maturity | Document parsing capability | 10% | _ /10 | _ /10 | _ /10 |

| | Retrieval strategy richness | 5% | _ /10 | _ /10 | _ /10 |

| | Advanced RAG capabilities | 5% | _ /10 | _ /10 | _ /10 |

| Security | International/industry certifications | 10% | _ /10 | _ /10 | _ /10 |

| | Regional compliance coverage | 5% | _ /10 | _ /10 | _ /10 |

| | Audit & content safety | 5% | _ /10 | _ /10 | _ /10 |

| TCO | 3-year total cost estimate | 10% | _ /10 | _ /10 | _ /10 |

| | Cost predictability | 5% | _ /10 | _ /10 | _ /10 |

| | Cost optimization tools | 5% | _ /10 | _ /10 | _ /10 |

| Ecosystem | Plugin/template ecosystem | 5% | _ /10 | _ /10 | _ /10 |

| | API openness | 3% | _ /10 | _ /10 | _ /10 |

| | Enterprise system integration | 2% | _ /10 | _ /10 | _ /10 |

| Total | | 100% | | | |

Weight Adjustment Recommendations:

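- Finance & government: Increase Security & Compliance from 20% to 30%

- Startups / PoC stage: Increase TCO and Architecture weights by 5 percentage points each

- Large enterprises with extensive existing systems: Increase Ecosystem weight from 10% to 15%

Whenever one weight goes up, reduce the others so the total stays at 100%.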
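
Changing one weight means the others have to shrink so the total stays at 100%. The sketch below shows one way to do that (proportional scaling) and how the weighted score is computed. For brevity it works at dimension level using the template's subtotals (30/20/20/20/10) rather than per criterion, and the vendor scores are invented.

```python
BASE_WEIGHTS = {
    "Architecture": 30, "RAG Maturity": 20, "Security": 20,
    "TCO": 20, "Ecosystem": 10,
}


def reweight(weights, dimension, new_weight):
    """Set one dimension's weight and scale the rest so the total stays 100."""
    others = {d: w for d, w in weights.items() if d != dimension}
    scale = (100 - new_weight) / sum(others.values())
    adjusted = {d: w * scale for d, w in others.items()}
    adjusted[dimension] = new_weight
    return adjusted


def weighted_score(scores, weights):
    """Weighted average of 0-10 scores; the result is also on a 0-10 scale."""
    return sum(scores[d] * weights[d] for d in weights) / sum(weights.values())


# Finance and government profile: Security & Compliance raised to 30%.
finance_weights = reweight(BASE_WEIGHTS, "Security", 30)

# Hypothetical scores for one vendor.
vendor_a = {"Architecture": 8, "RAG Maturity": 7, "Security": 9,
            "TCO": 6, "Ecosystem": 7}

base_score = weighted_score(vendor_a, BASE_WEIGHTS)
finance_score = weighted_score(vendor_a, finance_weights)

print(f"default weights: {base_score:.2f}, finance weights: {finance_score:.2f}")
```

Proportional scaling is only one option; a team may prefer to take the extra weight from a single dimension it considers least relevant.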

Omdia + IDC Cross-Validation

Two authoritative analyst reports validate the competitive landscape from different angles:

| Dimension | Omdia Market Radar 2026 | IDC MarketScape 2025 |

|---|---|---|

| Scope | Agentic AI Development Platforms (Asia & Oceania) | AI-Enabled Front-Office Conversational AI Software (Asia-Pacific) |

| Methodology | 7 core capability dimensions | Current capabilities + future strategy dual-axis |

| Tencent Cloud Rating | Market Leader (alongside AWS, Google Cloud, Azure) | Leader |

| Key Finding | Advanced Capability ratings across multiple dimensions | ADP described as "mission-critical, automated system" |

| Entry Barriers | Major global Agentic AI platform vendors | APAC revenue > $3M, customer retention > 1 year, ≥3 production clients |

Consensus Across Both Reports:

- Full-stack capability is table stakes: Platforms with only point-solution strengths cannot meet enterprise-grade requirements

- Regional compliance is a competitive moat: Asia-Pacific regulatory complexity makes "localization" a critical selection weight

- Low-code + enterprise-grade = market direction: The market is shifting from "developer tools" to "enterprise platforms usable by business users"

Selection Process Best Practices

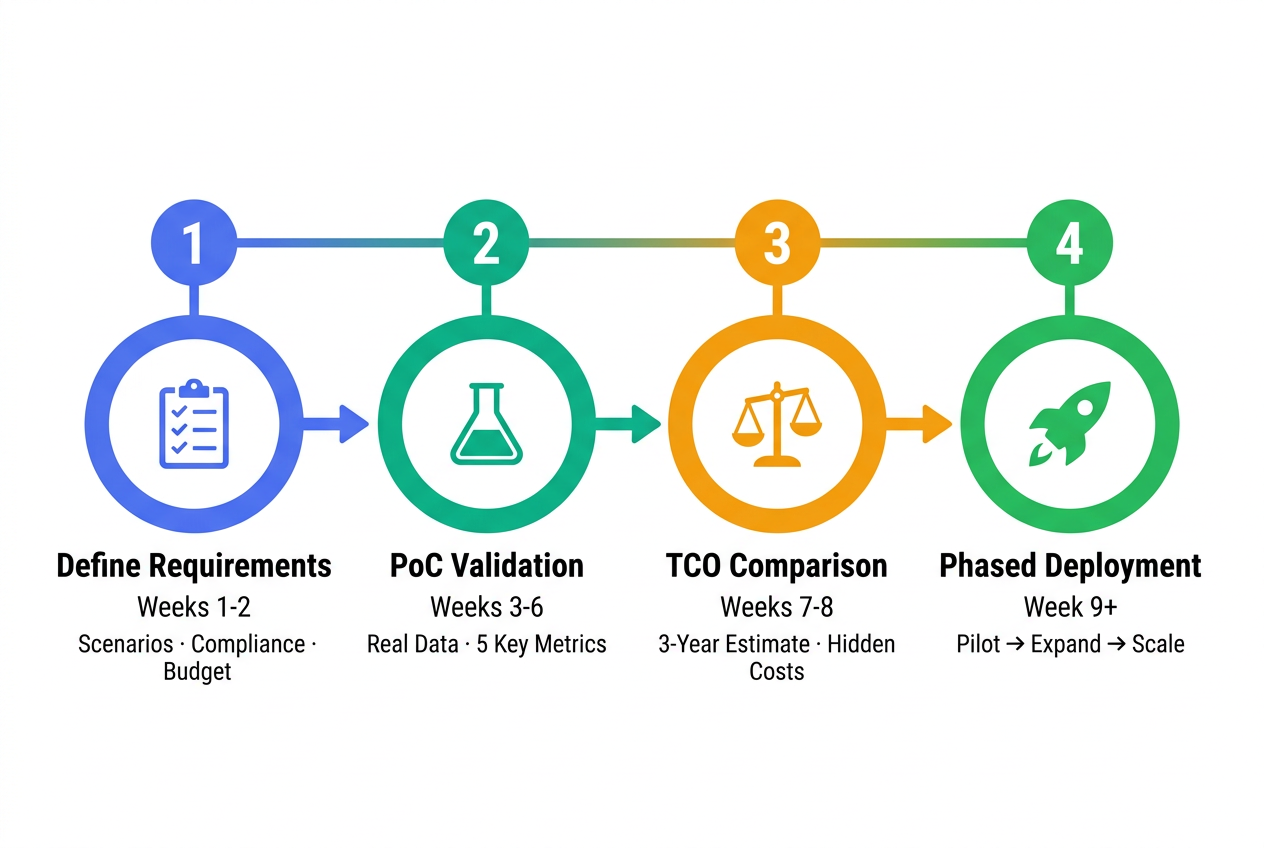

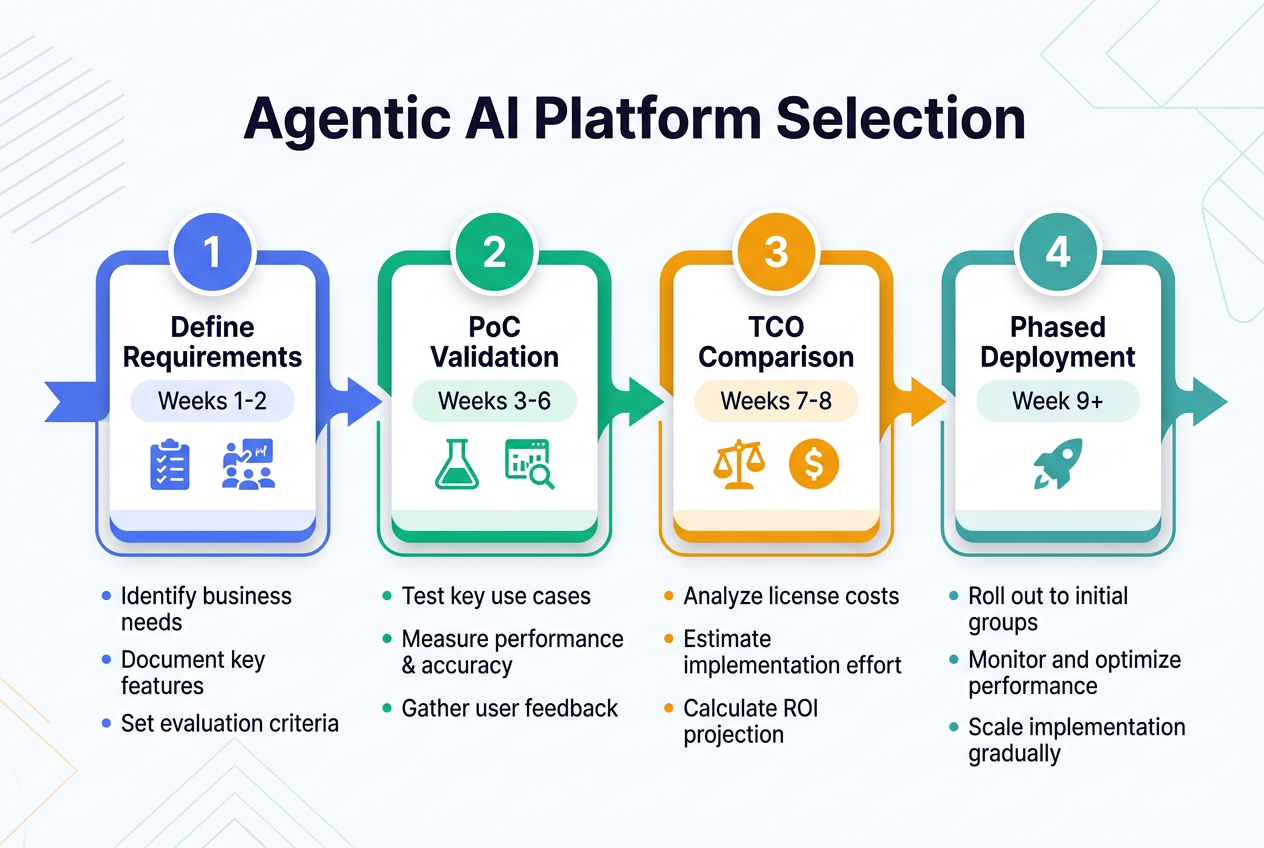

Step 1: Define Requirements & Evaluation Weights (Weeks 1-2)

Before engaging any vendor, complete internal alignment:

| Input | Output |

|---|---|

| Business scenario list (Top 5 priorities) | Functional requirements matrix |

| Compliance requirements (target markets + industry regulations) | Security compliance baseline |

| IT system inventory (systems requiring integration) | Integration complexity assessment |

| Budget range (Year 1 + 3-year) | TCO evaluation baseline |

Step 2: PoC Validation (Weeks 3-6)

PoC must use real enterprise data and real scenarios, not vendor-prepared demo data:

| Validation Item | Method | Pass Criteria |

|---|---|---|

| Document Parsing | Upload 20+ real enterprise documents (complex formats) | Table structure retention > 90% |

| RAG Accuracy | Prepare 50+ real business questions | Answer accuracy > 85% |

| Concurrency | Simulate 100+ concurrent users | P95 response latency < 5s |

| Workflow Orchestration | Build one complete business workflow | Business users can independently configure |

| Security & Compliance | Review certification documents and architecture | Meets industry compliance baseline |
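
The quantitative pass criteria in this table can be checked mechanically once the PoC data is collected. The sketch below is one possible way to do it, using a nearest-rank P95 and the thresholds from the table; the sample latencies and answer judgements are fabricated for illustration only.

```python
from typing import Dict, Sequence


def p95(latencies_s: Sequence[float]) -> float:
    """Nearest-rank 95th percentile of response latencies, in seconds."""
    if not latencies_s:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_s)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def accuracy(judgements: Sequence[bool]) -> float:
    """Fraction of test questions judged as answered correctly."""
    if not judgements:
        raise ValueError("no judgements")
    return sum(judgements) / len(judgements)


def evaluate_poc(table_retention: float,
                 judgements: Sequence[bool],
                 latencies_s: Sequence[float]) -> Dict[str, bool]:
    """Apply the pass criteria: > 90% retention, > 85% accuracy, P95 < 5s."""
    return {
        "document_parsing": table_retention > 0.90,
        "rag_accuracy": accuracy(judgements) > 0.85,
        "concurrency": p95(latencies_s) < 5.0,
    }


# Illustrative data: 100 requests under load and 50 judged answers.
latencies = [1.2] * 90 + [4.0] * 6 + [7.5] * 4
judgements = [True] * 44 + [False] * 6

report = evaluate_poc(0.93, judgements, latencies)
print(report)
```

Note that the slowest 4% of requests here take 7.5s and the run still passes on P95, so it is worth recording the maximum latency alongside the percentile.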

Step 3: TCO Comparison & Negotiation (Weeks 7-8)

Based on PoC results and the TCO model:

- Request 3-year TCO estimates from vendors, not just platform subscription quotes

- Account for hidden costs: integration development, operational personnel, compliance investments

- Negotiation focus areas: volume-based pricing tiers, annual commitment discounts, PoC-to-production transition terms

Step 4: Phased Deployment (Week 9+)

| Phase | Timeline | Objective |

|---|---|---|

| Pilot | 4-6 weeks | 1-2 scenarios live, validate production stability |

| Expansion | 2-3 months | Scale to 5-10 scenarios, establish operations framework |

| Full Scale | 3-6 months | Organization-wide rollout, establish platform governance |

Frequently Asked Questions

What is an Agentic AI Platform and how does it differ from traditional AI development platforms?

An Agentic AI Platform is the core infrastructure for building, deploying, and managing autonomous AI Agents. Unlike traditional AI platforms focused on model training and inference, Agentic AI platforms emphasize intelligent reasoning, task planning, multi-agent collaboration, and end-to-end workflow execution. Traditional platforms help you "use AI"; Agentic AI platforms enable AI to "work for you."

What dimensions should enterprises prioritize when selecting an Agentic AI platform?

We recommend systematic evaluation across 5 dimensions: Architecture Flexibility (can it scale from single-agent to multi-agent systems?), RAG Maturity (retrieval accuracy and document format coverage), Security & Compliance (does it meet target industry and market requirements?), TCO (3-year total cost, not just subscription fees), and Ecosystem Integration (can it connect with existing enterprise systems?). Weights should be adjusted based on industry and organizational maturity.

What is the typical TCO for an Agentic AI platform?

Model inference typically accounts for only 30-40% of total Agentic AI platform costs. Infrastructure (15-20%), integration development (15-25%), and operations personnel (10-15%) are the real cost drivers. One dairy company's AI Agent consumed 30 million tokens daily; at that volume, a selection that ignores prompt optimization, caching, and cost attribution tools could see monthly bills exceed budget by 200%. Request 3-year TCO estimates during the selection phase.

Are open-source Agentic AI platforms suitable for enterprise production?

Open-source solutions like Dify and n8n work well for PoC or internal tools when you have a dedicated AI infrastructure team. However, in production they face three challenges: compliance must be built from scratch (no existing certifications), operational complexity is high (vector databases, model tuning, and failover are all self-managed), and there's no enterprise SLA guarantee. For finance and government sectors with strict compliance requirements, open-source compliance costs are typically prohibitive.

How can enterprises avoid vendor lock-in with Agentic AI platforms?

Three strategies: (1) Choose platforms supporting multi-model mixing to avoid single-model ecosystem lock-in; (2) Check for MCP (Model Context Protocol) and A2A (Agent-to-Agent) open protocol support; (3) Evaluate data export capabilities—can knowledge bases, conversation data, and workflow configurations be fully exported? Tencent Cloud ADP supports YouTu models alongside mainstream models such as GPT and Gemini, providing high flexibility at the model layer.

What do "Leader" ratings in Omdia and IDC reports mean?

Both Omdia Market Leader and IDC Leader designations indicate vendors performing strongest across current technical capabilities and future strategic direction. In Omdia's 2026 report, Tencent Cloud was rated Market Leader alongside AWS, Google Cloud, and Microsoft Azure. In IDC MarketScape 2025, Tencent Cloud was similarly positioned in the Leader quadrant. Analyst reports should inform—not replace—selection decisions. Combine them with PoC validation and your specific requirements.

What key metrics should be tested during Agentic AI platform PoC?

PoC must use real enterprise data. Test 5 critical metrics: Document parsing (upload 20+ real documents, verify table structure retention > 90%), RAG accuracy (50+ real questions, accuracy > 85%), concurrency (100+ concurrent users, P95 latency < 5s), workflow orchestration (business users can independently configure complete workflows), and security compliance (certification and architecture review passes). Recommended PoC duration: 3-4 weeks.

What is the market outlook for Agentic AI in Asia-Pacific?

Omdia projects the Asia-Pacific Agentic AI software market will grow from $271 million in 2025 to $9.7 billion by 2030—a 105% CAGR. Three key drivers: enterprises moving from "AI pilots" to "AI at scale," open protocols like MCP and A2A lowering multi-platform integration barriers, and growing demand for hybrid deployment across public cloud, private cloud, and edge computing.
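
As a quick sanity check on the growth figure, the compound annual growth rate can be recomputed from the two endpoints. This assumes five compounding years between 2025 and 2030.

```python
start_musd = 271     # 2025 market size, in millions of USD
end_musd = 9_700     # 2030 market size, in millions of USD
years = 5            # assumed compounding periods, 2025 -> 2030

# CAGR = (end / start) ** (1 / years) - 1
cagr = (end_musd / start_musd) ** (1 / years) - 1

print(f"CAGR: {cagr:.1%}")
```

The result is a little under 105%, consistent with the rounded figure quoted from Omdia.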

Ready to start your Agentic AI platform selection?

→ Try Tencent Cloud ADP

This article is part of the Enterprise AI Agent series. Related reading:

- Omdia 2026: Tencent Cloud ADP Named Agentic AI Leader

- AI Agent Platform Selection: IDC 2025 Guide

- Enterprise RAG Guide: From Retrieval to Production Knowledge Systems
